Quickstart

Embedl Deploy keeps deployment preparation inside PyTorch. You provide a model and a hardware-target pattern set, and transform() rewrites the graph into a deployable form.

Core concepts

Modules: PyTorch nn.Module objects (e.g., ConvBatchNormRelu).

Patterns: Hardware-aware graph patterns such as fusions, conversions and quantization placements. A pattern finds a subgraph and replaces it with a hardware-aware equivalent. Patterns are determined through extensive exploration of the hardware toolchain and its restrictions, and are verified.

Transform: Applies patterns to your model in one pass.
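To make the "find a subgraph and replace it" idea concrete, here is a toy sketch in plain Python. It is not Embedl Deploy's implementation: the graph is simplified to a flat list of op names, and ToyPattern, toy_transform and the ConvBatchNorm replacement name are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyPattern:
    """Hypothetical pattern: a run of op names and the fused op replacing it."""
    name: str
    ops: tuple
    replacement: str


def toy_transform(graph, patterns):
    """Rewrite a linear op list in a single left-to-right pass.

    Returns the rewritten op list and a list of (pattern name, start index)
    matches. Patterns are tried in order, so list longer patterns first.
    """
    result, matches = [], []
    i = 0
    while i < len(graph):
        for pattern in patterns:
            window = tuple(graph[i:i + len(pattern.ops)])
            if window == pattern.ops:
                result.append(pattern.replacement)
                matches.append((pattern.name, i))
                i += len(pattern.ops)
                break
        else:
            # No pattern starts at this position: keep the op unchanged.
            result.append(graph[i])
            i += 1
    return result, matches


# Assumed toy pattern set; real pattern sets target a specific toolchain.
TOY_PATTERNS = [
    ToyPattern("conv_bn_relu", ("conv", "batchnorm", "relu"), "ConvBatchNormRelu"),
    ToyPattern("conv_bn", ("conv", "batchnorm"), "ConvBatchNorm"),
]

graph = ["conv", "batchnorm", "relu", "maxpool", "conv", "batchnorm", "linear"]
new_graph, matches = toy_transform(graph, TOY_PATTERNS)
print(new_graph)
print(matches)
```

The sketch returns both the rewritten graph and the matches, loosely mirroring the model and matches fields read from the transform result in the usage example. A real graph is not a flat list, so actual matching has to follow data-flow edges rather than adjacent positions.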

The public release contains TensorRT patterns, within the following groups:

Fusion patterns: operator fusions, e.g., Conv+BatchNorm+ReLU

Conversion patterns: structural rewrites replacing one layer with another

Quantized patterns: quantization-focused rewrites
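As background on why a Conv+BatchNorm+ReLU fusion can preserve the model's outputs, the sketch below folds inference-mode BatchNorm statistics into the preceding convolution's weights and biases. This is the standard folding identity, shown in plain Python on a 1x1 convolution at a single pixel. Whether and how the TensorRT patterns apply this folding is an assumption, and all function names are hypothetical.

```python
import math


def conv1x1(x, weights, biases):
    """A 1x1 convolution at one pixel: one dot product per output channel."""
    return [sum(w * xi for w, xi in zip(w_row, x)) + b
            for w_row, b in zip(weights, biases)]


def batchnorm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-mode BatchNorm using fixed running statistics."""
    return [g * (yi - mu) / math.sqrt(v + eps) + bt
            for yi, g, bt, mu, v in zip(y, gamma, beta, mean, var)]


def relu(y):
    return [max(0.0, yi) for yi in y]


def fold_batchnorm(weights, biases, gamma, beta, mean, var, eps=1e-5):
    """Fold BatchNorm into per-output-channel conv weights and biases."""
    folded_w, folded_b = [], []
    for w_row, b, g, bt, mu, v in zip(weights, biases, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)
        folded_w.append([w * scale for w in w_row])
        folded_b.append((b - mu) * scale + bt)
    return folded_w, folded_b


# Two output channels, three input channels; values are arbitrary.
weights = [[0.5, -1.0, 2.0], [1.5, 0.25, -0.75]]
biases = [0.1, -0.2]
gamma, beta = [1.2, 0.8], [0.05, -0.1]
mean, var = [0.3, -0.4], [0.9, 1.6]
x = [1.0, 2.0, -1.0]

# Reference: three separate ops.
reference = relu(batchnorm(conv1x1(x, weights, biases), gamma, beta, mean, var))

# Fused: a single conv with folded parameters, followed by ReLU.
folded_w, folded_b = fold_batchnorm(weights, biases, gamma, beta, mean, var)
fused = relu(conv1x1(x, folded_w, folded_b))

print(reference)
print(fused)
```

The two printed lists agree up to floating-point rounding. The identity holds only for inference-mode BatchNorm, where the statistics are fixed, which is consistent with the usage example calling eval() on the model before transforming it.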

The only requirement to run a transform is to pass a pattern set for your hardware target.

Usage

import torch

from embedl_deploy import transform

from embedl_deploy.quantize import quantize

from embedl_deploy.tensorrt import TENSORRT_PATTERNS

from torchvision.models import resnet18 as Model

# 1. Load a standard PyTorch model

model = Model().eval()

example_input = torch.randn(1, 3, 224, 224)

# 2. Transform — fuse and optimize for TensorRT in one call

res = transform(model, patterns=TENSORRT_PATTERNS)

# print_readable() prints by default; request only the returned string here
print("Model\n", res.model.print_readable(print_output=False))

print("Matches", "\n".join([str(match) for match in res.matches]))

# 3. Quantize (PTQ)

def calibration_loop(model: torch.fx.GraphModule):
    model.eval()
    for _ in range(100):
        model(torch.randn(1, 3, 224, 224))

quantized_model = quantize(
    res.model, (example_input,), forward_loop=calibration_loop
)

quantized_model.eval()

# 4. Export as usual (dynamo exported models may have compilation issues)

torch.onnx.export(
    quantized_model, (example_input,), "model.onnx", dynamo=False
)

# 5. Quantization-aware training with a training loop

qat_model = quantized_model.train()

# Freeze BatchNorm, or apply other QAT utilities as needed

# train(qat_model)

# Compile
# -------
# Compilation can be done with TensorRT's trtexec tool, which takes the ONNX
# model and compiles it for inference. The exported layer info and profile can
# be used for debugging, optimization and visualization.
#
# Note that the ONNX model might need to be simplified with onnx-simplifier
# before trtexec can compile it. Dynamo-exported models may have compilation
# issues, so exporting with dynamo=False is recommended.
#
# We are working on an ATen-based export path that should be more robust and
# support more models in the future.
#
# >> onnxsim model.onnx model.onnx
# >> trtexec \
#      --onnx=model.onnx \
#      --exportLayerInfo=layer_info.json \
#      --exportProfile=profile.json \
#      --profilingVerbosity=detailed
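The trtexec invocation shown in the comments above can also be assembled from Python, which is convenient when compiling several exported models in a script. The following is a minimal sketch: build_trtexec_command and its out_dir parameter are hypothetical helpers, and only the flags already listed above are used. The block only builds and prints the command, since running it requires trtexec to be installed.

```python
import shlex
from pathlib import Path


def build_trtexec_command(onnx_path, out_dir="."):
    """Return the trtexec argument list for one ONNX model.

    Layer info and profile JSON files are written into out_dir.
    """
    out = Path(out_dir)
    return [
        "trtexec",
        f"--onnx={onnx_path}",
        f"--exportLayerInfo={out / 'layer_info.json'}",
        f"--exportProfile={out / 'profile.json'}",
        "--profilingVerbosity=detailed",
    ]


command = build_trtexec_command("model.onnx")
print(shlex.join(command))

# To actually compile (assumes trtexec is on PATH):
# import subprocess
# subprocess.run(command, check=True)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems when model paths contain spaces.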

Visualization

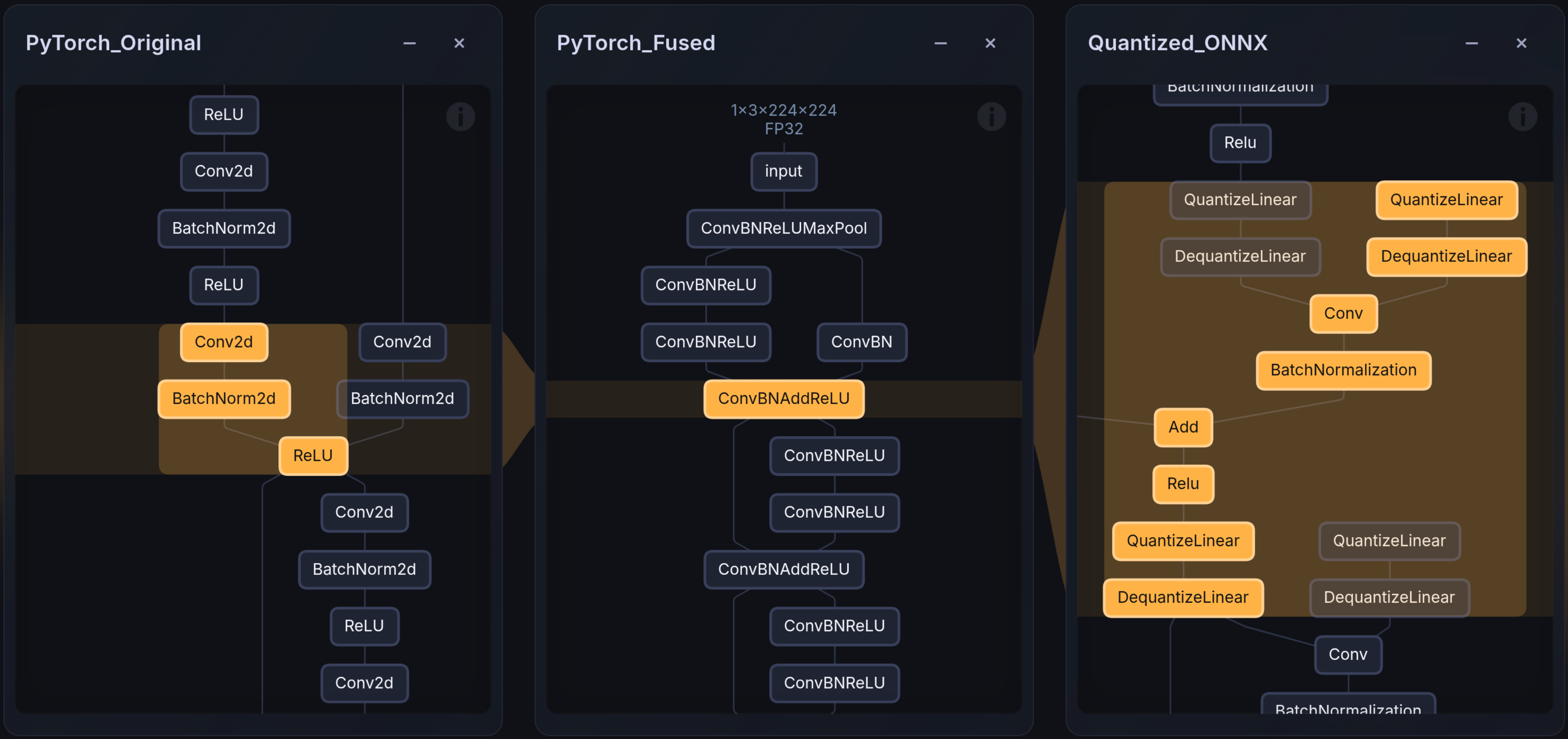

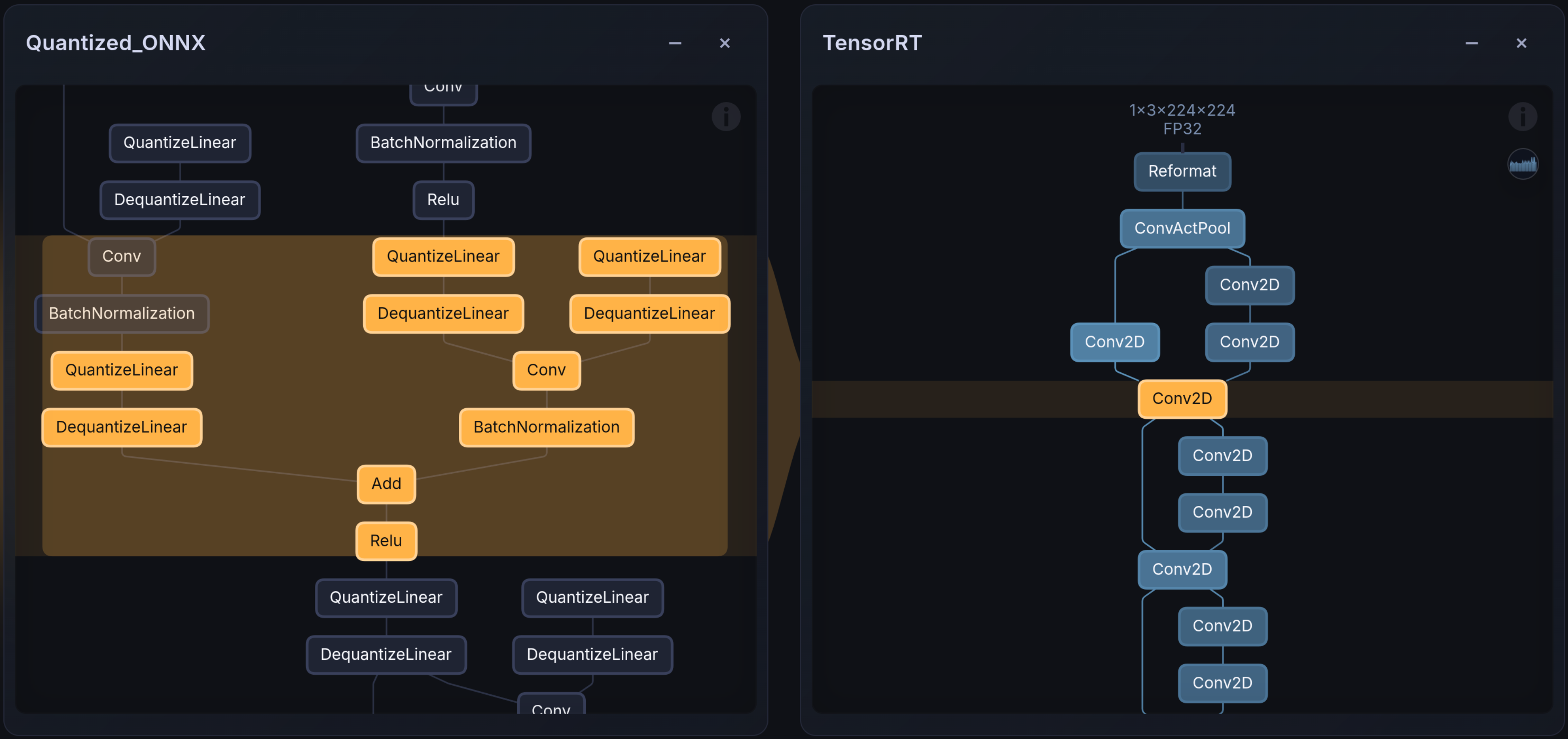

The Embedl visualizer is a graphical tool that can be used to render PyTorch graphs, ONNX models, and hardware-compiled artifacts (e.g., TensorRT compiled engines, TIDL models) side-by-side for comparison and debugging. It is available online for public use on the Embedl Hub and locally for enterprise solutions.

The images above show the layer mapping before and after TensorRT compilation.